Operationalizing GeoAI: scaling, integration and challenges

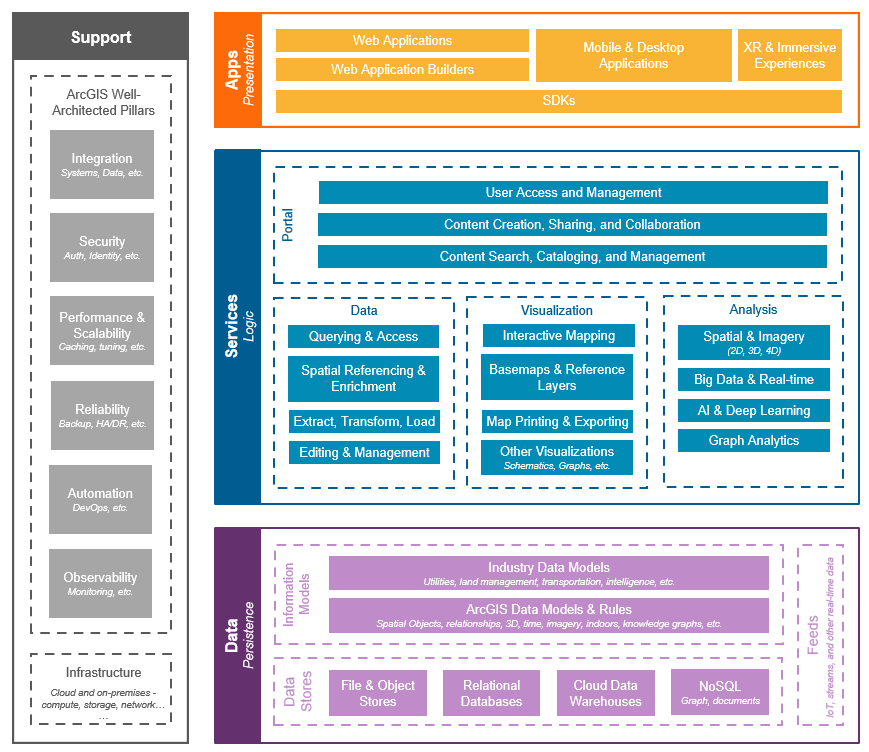

GeoAI in an Enterprise GeoICT Architecture

GeoAI becomes operational only when it is embedded within an organization’s broader GeoICT ecosystem. It should not be viewed as a standalone technology but as a specialized analytical capability that interacts with existing spatial data infrastructures, enterprise GIS platforms, and IT governance frameworks.

For project managers, the key shift is from thinking about models and experiments to thinking about systems, services, and sustained operations.

Where GeoAI Fits in a Spatial Data Infrastructure (SDI)

A typical Spatial Data Infrastructure (SDI) consists of several layers:

- Data layer — authoritative spatial datasets such as base maps, imagery archives, elevation models, and thematic layers

- Service layer — web services that provide access to data (maps, features, tiles)

- Application layer — GIS software, dashboards, and decision-support tools

- Governance layer — standards, metadata, policies, and data stewardship

GeoAI fits primarily as an analytical processing component that sits between the data layer and the service/application layers.

In this role, GeoAI:

- Consumes large volumes of raw or preprocessed geospatial data

- Produces derived datasets such as extracted features, classifications, or predictions

- Feeds these outputs back into the SDI as new data layers or services

Manager’s perspective: GeoAI should be planned as part of the SDI architecture, with defined inputs, outputs, and responsibilities. It is not an isolated research activity but a production workflow that generates new authoritative or semi-authoritative data products.

GeoAI as a Processing Layer, Not a Replacement for GIS

A common misconception is that GeoAI will replace GIS. In reality, GeoAI extends GIS by automating certain types of pattern recognition and large-scale analysis.

GIS remains essential for:

- Data management and stewardship

- Spatial editing and quality control

- Cartographic production

- Integration of multiple thematic layers

GeoAI contributes by:

- Automating feature extraction from imagery

- Generating predictive or classification surfaces

- Processing volumes of data that would be impractical to handle manually

From an architectural perspective, GeoAI is best understood as a processing layer that transforms raw geospatial data into higher-level information products. These products then re-enter the GIS ecosystem, where they are validated, integrated, and disseminated.

Manager’s perspective: Investments in GeoAI should strengthen, not replace, existing GIS capabilities. The most effective systems are those where AI-generated outputs flow smoothly into established GIS workflows and data governance structures.

From Pilot Project to Operational System

Many GeoAI initiatives begin as experiments or proofs of concept. These early efforts are valuable for demonstrating feasibility, but they do not automatically translate into reliable operational systems. Moving from a pilot to production requires changes in mindset, processes, and technical design.

For managers, the transition involves shifting focus from “Does this work?” to “Can this work consistently, at scale, and over time?”

Differences Between Research, Pilot, and Production

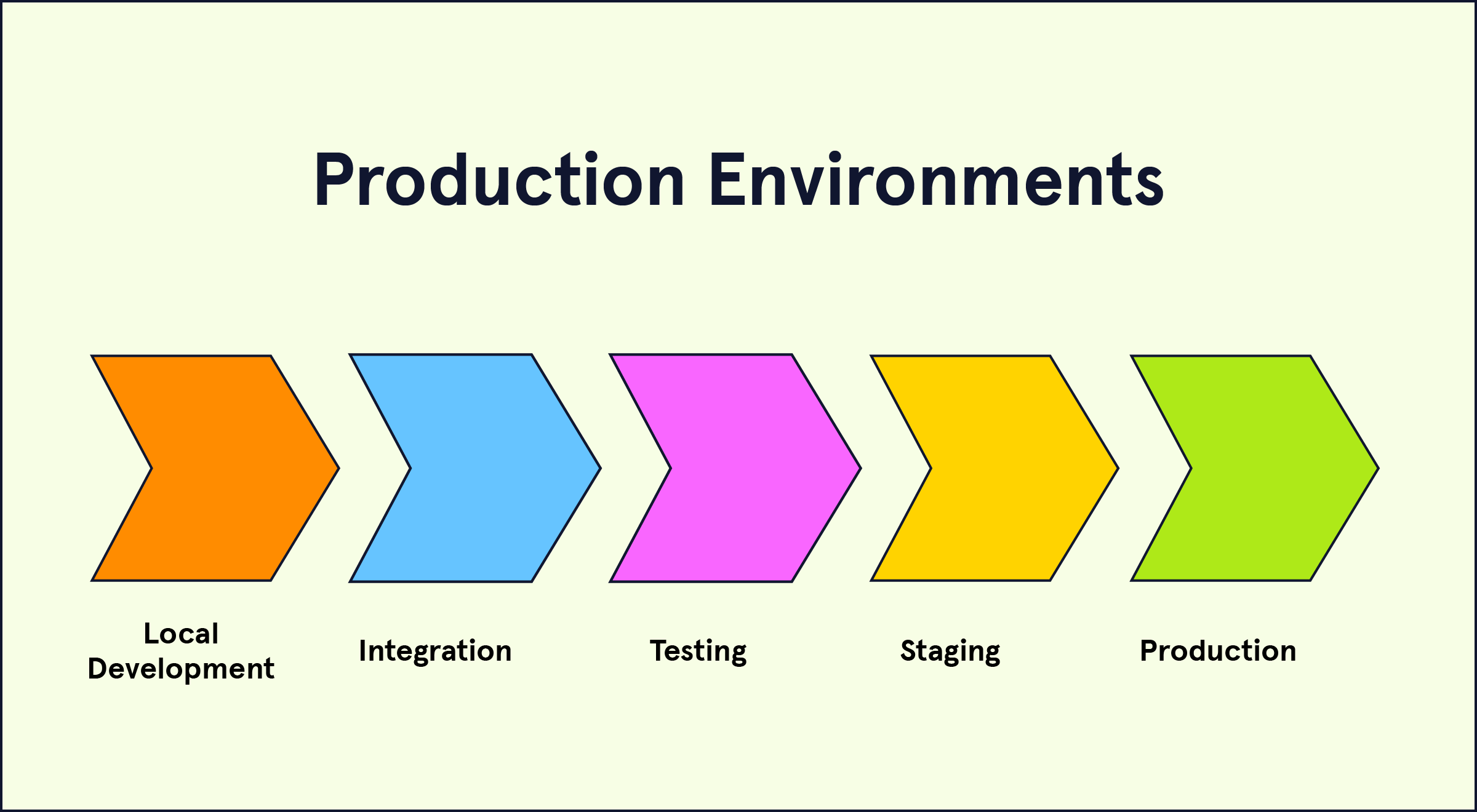

GeoAI efforts typically pass through three stages:

Research stage: Focuses on testing ideas, comparing algorithms, and exploring what is technically possible. Data may be limited, and workflows are often informal.

Pilot stage: Applies a selected approach to a defined area or problem. The goal is to assess performance under more realistic conditions and identify practical constraints.

Production stage: Implements a stable, repeatable workflow that operates on a regular schedule and integrates with enterprise systems.

Key differences include:

- Research emphasizes flexibility; production emphasizes reliability

- Pilots tolerate manual steps; production requires automation

- Research accepts uncertainty; production requires defined quality standards

Manager takeaway: A successful pilot does not automatically mean a system is ready for operational use. Additional work is needed to formalize and stabilize the workflow.

Stability vs Experimentation

In research and pilot phases, frequent changes to models, parameters, and data are common. In operational environments, excessive change can create inconsistency and reduce trust in outputs.

Operational GeoAI systems require:

- Stable model versions for defined periods

- Controlled updates after testing and validation

- Clear documentation of any changes

Experimentation should continue, but it should occur in separate development environments, not directly in production systems.

Manager takeaway: Operational systems balance innovation with stability. Changes must be managed carefully to avoid disrupting established workflows.

Repeatability and Standard Operating Procedures

In operational GeoAI, processes must be repeatable. The same inputs and procedures should produce consistent outputs.

This requires:

- Documented workflows describing each processing step

- Automated pipelines for data preparation, model execution, and output generation

- Defined schedules (for example, monthly or seasonal processing)

- Procedures for quality checks and approvals

Standard Operating Procedures (SOPs) ensure continuity even when staff change and help maintain consistent product quality.

Manager takeaway: Operational success depends on documented, automated, and repeatable workflows rather than ad hoc processing.

Scaling GeoAI Workflows

A GeoAI workflow that works for a small test area may not automatically scale to larger regions. Scaling introduces challenges related to data volume, compute requirements, workflow management, and quality control. Operational GeoAI must be designed from the beginning with scale in mind.

For managers, scaling is about ensuring that performance, reliability, and timelines remain acceptable as coverage expands.

Scaling from One District to Nationwide Coverage

A pilot project may process imagery for a single district or city. National or state-level implementations multiply data volume and processing time dramatically.

Key differences at larger scales include:

- Increased storage for imagery and intermediate products

- Longer processing times requiring parallel or distributed computing

- Greater variability in landscape, climate, and urban form

- More complex logistics for quality validation

Workflows must be designed to handle thousands of image tiles rather than a few scenes. Data organization and naming conventions become critical to avoid confusion.
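A naming convention is easiest to enforce when file names are generated and parsed by code rather than typed by hand. The sketch below shows one possible scheme; the `TileId` class, its fields, and the `region_date_zoom_col_row_product` layout are illustrative assumptions, not a standard.

```python
from dataclasses import dataclass
from datetime import date, datetime


@dataclass(frozen=True)
class TileId:
    """Identifies one image tile; all fields belong to a hypothetical scheme."""
    region: str     # administrative code, must not contain "_"
    acquired: date  # acquisition date of the source imagery
    zoom: int
    col: int
    row: int

    def filename(self, product: str, ext: str = "tif") -> str:
        # Zero-padded fields keep names fixed-width and sortable.
        return (
            f"{self.region}_{self.acquired:%Y%m%d}_z{self.zoom:02d}"
            f"_x{self.col:06d}_y{self.row:06d}_{product}.{ext}"
        )

    @classmethod
    def parse(cls, name: str) -> "tuple[TileId, str]":
        """Inverse of filename(): returns the tile id and the product name."""
        stem = name.rsplit(".", 1)[0]
        region, day, z, x, y, product = stem.split("_")
        acquired = datetime.strptime(day, "%Y%m%d").date()
        return cls(region, acquired, int(z[1:]), int(x[1:]), int(y[1:])), product


tile = TileId("KA", date(2024, 3, 15), zoom=12, col=2931, row=1874)
name = tile.filename("landcover")
# name == "KA_20240315_z12_x002931_y001874_landcover.tif"
```

Because `parse` reverses `filename`, any tile found in storage can be mapped back to its region, date, and grid position without a separate lookup table.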

Manager takeaway: Scaling is not just running the same script on more data. It requires rethinking data management, compute strategy, and validation approaches.

Batch Processing vs Continuous Monitoring

Operational GeoAI systems may follow different processing strategies.

Batch processing: Data is processed at defined intervals, such as monthly, quarterly, or annually. This is common for land cover mapping or seasonal monitoring.

Continuous monitoring: New imagery is processed as it becomes available, supporting near real-time detection of changes such as flooding, construction, or deforestation.

Each approach has implications:

- Batch processing simplifies planning and quality control

- Continuous monitoring requires more automation and faster turnaround

- Data storage and compute needs differ significantly

Manager takeaway: The choice between batch and continuous processing depends on operational needs, urgency of information, and available infrastructure.

Automation and Orchestration Concepts

As scale increases, manual execution of workflows becomes impractical. Automation and orchestration tools help manage complex, multi-step processes.

Automation involves:

- Automatically triggering preprocessing, model inference, and post-processing steps

- Scheduling workflows based on time or data availability

Orchestration involves:

- Coordinating multiple tasks in the correct order

- Managing dependencies between steps

- Monitoring workflow status and handling failures

These systems ensure that large-scale GeoAI processes run reliably and repeatedly without constant manual intervention.
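The orchestration ideas above (ordering, dependencies, failure handling) can be illustrated with a very small runner built on Python's standard `graphlib` module. This is a sketch only: the task names, the `run_pipeline` function, and the retry policy are assumptions made for illustration, and a production system would normally rely on a dedicated workflow tool.

```python
from graphlib import TopologicalSorter


def run_pipeline(tasks, dependencies, max_retries=1):
    """Run tasks in dependency order and report a status for each.

    tasks: mapping of task name -> zero-argument callable
    dependencies: mapping of task name -> set of prerequisite task names
    """
    statuses = {}
    for name in TopologicalSorter(dependencies).static_order():
        upstream = dependencies.get(name, ())
        # Do not run a step whose prerequisites failed or were skipped.
        if any(statuses.get(dep) != "ok" for dep in upstream):
            statuses[name] = "skipped"
            continue
        for _attempt in range(max_retries + 1):
            try:
                tasks[name]()
                statuses[name] = "ok"
                break
            except Exception as exc:  # record the failure and retry if allowed
                statuses[name] = f"failed: {exc}"
    return statuses


def failing_inference():
    raise RuntimeError("GPU node unavailable")


# Hypothetical four-step workflow in which the inference step fails.
tasks = {
    "preprocess": lambda: None,
    "inference": failing_inference,
    "postprocess": lambda: None,
    "publish": lambda: None,
}
dependencies = {
    "preprocess": set(),
    "inference": {"preprocess"},
    "postprocess": {"inference"},
    "publish": {"postprocess"},
}
statuses = run_pipeline(tasks, dependencies)
```

In this example `preprocess` succeeds, `inference` is retried once and then marked as failed, and the two downstream steps are skipped rather than run on missing inputs, which is the behaviour an operator would want to see in a status report.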

Manager takeaway: Scaling GeoAI requires moving from manual processing to automated, managed pipelines that can handle large volumes of data efficiently.

Infrastructure Planning for Operational GeoAI

Operational GeoAI requires infrastructure that can reliably handle large data volumes, computationally intensive processing, and regular production cycles. Infrastructure decisions affect cost, performance, security, and long-term sustainability.

For managers, planning infrastructure means aligning technical capacity with operational goals, data policies, and budget constraints.

Compute Infrastructure (Cloud vs On-Premise)

GeoAI model training and large-scale inference often require high-performance computing, especially GPUs.

Cloud infrastructure: Cloud platforms provide on-demand access to computing resources, including GPU-enabled instances. They allow organizations to scale up for large processing tasks and scale down afterward.

Advantages include:

- Flexibility and scalability

- Reduced need for upfront hardware investment

- Faster experimentation and deployment

On-premise infrastructure: Some organizations prefer to maintain their own servers due to data sensitivity, regulatory requirements, or long-term cost considerations.

Advantages include:

- Greater control over data and systems

- Potential cost efficiency for sustained, high-volume processing

- Integration with existing enterprise IT environments

Hybrid approaches are also common, where sensitive data remains on-premise while large processing jobs run in the cloud.

Manager takeaway: The choice between cloud and on-premise depends on data governance, budget model, technical expertise, and expected workload variability.

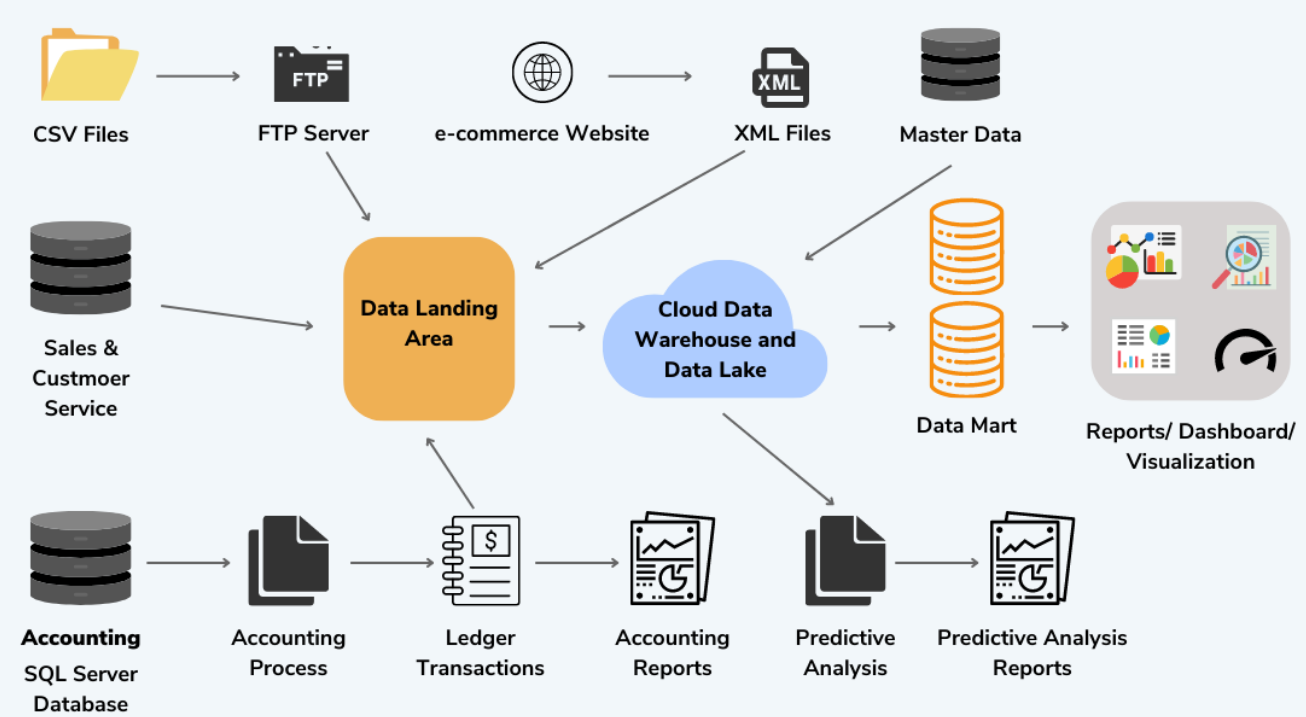

Storage Requirements and Data Flow

GeoAI workflows generate and consume large volumes of data, including raw imagery, intermediate products, training datasets, and final outputs.

Key considerations include:

- Sufficient storage capacity for both raw and processed data

- Efficient organization of imagery tiles and metadata

- Backup and archival strategies for long-term retention

- Clear data flow between acquisition, processing, and publication systems

Poor data organization can lead to duplication, confusion, and processing delays.

Manager takeaway: Storage planning is not just about capacity but also about structure, access patterns, and lifecycle management of datasets.

Network and Data Transfer Considerations

Large geospatial datasets can be difficult to move between systems. Network speed and reliability directly affect processing timelines.

Important factors include:

- Bandwidth for transferring imagery from storage to processing environments

- Latency when accessing cloud-hosted data from local systems

- Strategies to minimize unnecessary data movement

- Co-locating storage and compute resources when possible

For example, moving terabytes of imagery across slow networks can delay processing more than the model computation itself.
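A rough back-of-the-envelope calculation makes this concrete. The helper below is a sketch under stated assumptions: data size is in decimal terabytes (10^12 bytes), link speed is in megabits per second, and the `efficiency` factor of 0.8 is an assumed allowance for protocol overhead and contention, not a measured value.

```python
def transfer_hours(size_tb: float, link_mbps: float, efficiency: float = 0.8) -> float:
    """Estimate the hours needed to move size_tb terabytes over a link.

    efficiency is the assumed fraction of nominal bandwidth actually achieved.
    """
    bits = size_tb * 1e12 * 8                    # terabytes -> bits
    usable_bits_per_second = link_mbps * 1e6 * efficiency
    return bits / usable_bits_per_second / 3600  # seconds -> hours


slow_link = transfer_hours(5, 100)     # 5 TB over a 100 Mbps link
fast_link = transfer_hours(5, 10_000)  # 5 TB over a 10 Gbps link
```

Under these assumptions, 5 TB takes roughly 139 hours (close to six days) over a 100 Mbps link, but under an hour and a half over 10 Gbps. Estimates of this kind help decide early whether data should be moved at all or whether processing should run where the data already sits.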

Manager takeaway: Data transfer can become a hidden bottleneck. Infrastructure design should minimize movement of large datasets and place processing close to where data is stored.

Integration with Existing GIS Systems

Operational GeoAI does not exist in isolation. Its outputs must integrate smoothly with existing enterprise GIS platforms, spatial databases, and web services. Without proper integration, AI-generated layers remain disconnected products rather than part of the organization’s authoritative geospatial ecosystem.

For managers, integration ensures that GeoAI results become usable assets within established workflows and systems.

Bringing AI Outputs into Enterprise GIS

AI outputs such as classified rasters, probability surfaces, and extracted vector features must be incorporated into enterprise GIS environments.

This typically involves:

- Storing outputs in spatial databases or file repositories

- Registering layers in GIS servers for access by users

- Applying consistent naming conventions and metadata standards

Outputs may be used as:

- New thematic layers

- Inputs for further spatial analysis

- Updates to existing datasets after validation

Manager takeaway: GeoAI outputs should follow the same data management and publication standards as other enterprise GIS layers.

Interoperability with OGC Services

Interoperability ensures that AI-derived data can be accessed through standard geospatial services.

Publishing outputs through OGC-compliant services allows them to be used in:

- Desktop GIS applications

- Web mapping platforms

- External partner systems

Using open standards avoids vendor lock-in and supports integration across different platforms.

Manager takeaway: Adhering to interoperability standards ensures that GeoAI products can be widely accessed and reused within and beyond the organization.

Maintaining Consistency with Authoritative Data

AI-generated outputs may overlap with or update existing authoritative datasets. Managing this relationship is critical.

Key practices include:

- Comparing AI outputs with existing official layers

- Defining procedures for updating authoritative data based on AI results

- Maintaining clear versioning and documentation of updates

AI results should be treated as candidate data that undergoes validation before being designated as authoritative.

Manager takeaway: Consistency with official datasets maintains trust and prevents conflicting information within the enterprise GIS environment.

Model Lifecycle Management

Operational GeoAI systems are not static. Models that perform well today may degrade over time as landscapes change, data sources evolve, or operational requirements shift. Managing the lifecycle of models is therefore essential for maintaining reliability and trust.

For managers, model lifecycle management ensures that GeoAI systems remain accurate, traceable, and aligned with current conditions.

Model Versioning and Updates

Each trained model should be treated as a versioned asset, similar to software releases.

Versioning involves:

- Assigning identifiers to each model version

- Recording the training data version, parameters, and date

- Archiving previous models rather than overwriting them

Updates should follow a controlled process:

- New versions are tested before deployment

- Changes are documented and communicated

- Rollback to earlier versions remains possible if issues arise
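The versioning and update rules listed above can be sketched as a small in-memory registry. Everything here is illustrative: `ModelVersion`, `ModelRegistry`, and the version strings are hypothetical names, and a real deployment would persist this information in a database or a dedicated model registry tool.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class ModelVersion:
    version: str                # identifier, e.g. "1.1.0"
    training_data_version: str  # which labelled dataset was used
    parameters: dict            # training parameters worth recording
    trained_on: date
    validated: bool = False     # set once testing has been passed


class ModelRegistry:
    def __init__(self):
        self._versions = {}  # every registered version is kept, never overwritten
        self._history = []   # deployment history, newest last

    def register(self, model: ModelVersion) -> None:
        if model.version in self._versions:
            raise ValueError(f"version {model.version} already registered")
        self._versions[model.version] = model

    def deploy(self, version: str) -> None:
        if not self._versions[version].validated:
            raise ValueError(f"version {version} has not been validated")
        self._history.append(version)

    def rollback(self) -> str:
        """Return to the previously deployed version."""
        if len(self._history) < 2:
            raise RuntimeError("no earlier deployment to roll back to")
        self._history.pop()
        return self._history[-1]

    @property
    def active(self):
        return self._history[-1] if self._history else None


registry = ModelRegistry()
registry.register(ModelVersion("1.0.0", "labels-2023", {"epochs": 40}, date(2023, 6, 1), validated=True))
registry.register(ModelVersion("1.1.0", "labels-2024", {"epochs": 60}, date(2024, 6, 1), validated=True))
registry.deploy("1.0.0")
registry.deploy("1.1.0")
restored = registry.rollback()  # "1.0.0" becomes active again
```

The two design choices that matter are that registering never overwrites an existing version and that deployment refuses unvalidated models, so rollback always has a known-good target.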

Manager takeaway: Version control enables traceability, accountability, and safe updates in operational environments.

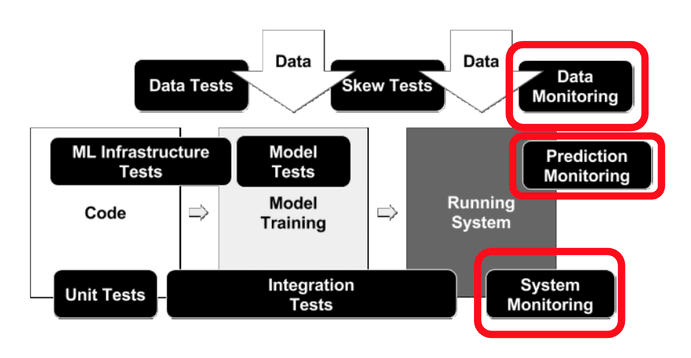

Monitoring Model Performance Over Time

Model performance can change as new imagery, seasons, or land use patterns appear. Continuous monitoring helps detect when a model is no longer performing as expected.

Monitoring may include:

- Periodic accuracy assessments using new validation samples

- Tracking error rates for critical feature classes

- Detecting shifts in input data characteristics

Performance reports should be reviewed regularly to ensure the model remains suitable for operational use.
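One way to detect shifts in input data characteristics is to compare the distribution of a simple statistic (for example, mean tile brightness) between the training data and newly arrived imagery. The sketch below uses the Population Stability Index (PSI), a technique chosen here for illustration rather than one prescribed by this course; the bin count and the synthetic values are assumptions.

```python
import math


def psi(reference, current, bins=10, eps=1e-6):
    """Population Stability Index between two samples of a numeric statistic.

    Bins are equal-width over the range of the reference sample; values
    outside that range are counted in the first or last bin.
    """
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # avoid zero width for constant data

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # eps keeps empty bins from producing log(0) or division by zero
        return [max(c / len(values), eps) for c in counts]

    ref_p, cur_p = proportions(reference), proportions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_p, cur_p))


# Synthetic example: brightness values at training time vs. a brighter new batch.
training_brightness = [i / 100 for i in range(100)]
new_brightness = [0.5 + i / 100 for i in range(100)]

same = psi(training_brightness, training_brightness)
shifted = psi(training_brightness, new_brightness)
```

A commonly quoted rule of thumb treats PSI below 0.1 as stable and above 0.25 as a significant shift, but such thresholds are conventions and should be calibrated against the organization's own data. Here `same` is zero and `shifted` is well above 0.25, which would be a signal to schedule an accuracy assessment.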

Manager takeaway: Ongoing monitoring prevents unnoticed degradation and supports evidence-based decisions about model updates.

Retraining Strategies When Conditions Change

When significant changes occur — such as new construction patterns, seasonal differences, or new data sources — models may need retraining.

Retraining strategies include:

- Adding new labeled data that reflects changed conditions

- Expanding geographic coverage of training samples

- Updating models at defined intervals (for example, annually)

Retraining should follow the same governance and validation processes as initial model development.

Manager takeaway: GeoAI systems require periodic refresh to stay aligned with evolving real-world conditions.

Quality Assurance and Validation at Scale

As GeoAI systems scale to larger areas and more frequent updates, manual inspection of every output becomes impractical. However, quality assurance cannot be removed from the workflow. Instead, it must be redesigned to operate efficiently at scale.

For managers, large-scale validation is about balancing automation with targeted human oversight to ensure that AI outputs remain reliable and fit for purpose.

Human-in-the-Loop Systems

In operational GeoAI, humans remain essential in supervising automated outputs.

Human-in-the-loop systems involve:

- Reviewing samples of AI-generated features

- Correcting systematic errors

- Providing feedback that can improve future model versions

Rather than replacing experts, GeoAI shifts their role from manual digitizing to targeted review and decision-making.

Manager takeaway: Human oversight is a design component of operational systems, not an optional extra.

Sampling-Based Quality Checks

Since full manual review is infeasible at scale, quality assessment often relies on statistically designed sampling.

This may include:

- Selecting random or stratified sample areas for review

- Comparing AI outputs with reference data or higher-resolution imagery

- Estimating accuracy metrics for different feature classes

Sampling provides a realistic estimate of performance without requiring complete manual inspection.
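The sampling steps described above can be sketched in two small functions: one draws a reproducible stratified sample of tiles for review, the other computes per-class accuracy from reviewed samples. The function names, the `stratum` field, and the class labels are hypothetical. The accuracy reported is producer's accuracy, meaning the share of reference samples of each class that the model labelled correctly.

```python
import random
from collections import defaultdict


def stratified_sample(items, stratum_of, per_stratum, seed=0):
    """Pick up to per_stratum items from each stratum, reproducibly."""
    rng = random.Random(seed)  # fixed seed so the same review set can be redrawn
    groups = defaultdict(list)
    for item in items:
        groups[stratum_of(item)].append(item)
    sample = []
    for key in sorted(groups):
        members = groups[key]
        sample.extend(rng.sample(members, min(per_stratum, len(members))))
    return sample


def producers_accuracy(pairs):
    """pairs: iterable of (predicted_class, reference_class) from reviewed samples."""
    totals, correct = defaultdict(int), defaultdict(int)
    for predicted, reference in pairs:
        totals[reference] += 1
        if predicted == reference:
            correct[reference] += 1
    return {cls: correct[cls] / totals[cls] for cls in totals}


# Hypothetical tiles: 5 urban and 45 rural; review 3 from each stratum.
tiles = [{"id": i, "stratum": "urban" if i < 5 else "rural"} for i in range(50)]
review_set = stratified_sample(tiles, lambda t: t["stratum"], per_stratum=3, seed=42)

reviewed = [("water", "water"), ("water", "forest"), ("forest", "forest"), ("urban", "urban")]
accuracy = producers_accuracy(reviewed)
# accuracy == {"water": 1.0, "forest": 0.5, "urban": 1.0}
```

Sampling the same number of tiles per stratum ensures rare strata are checked, but an overall accuracy figure derived from such a sample would need to be weighted by the true size of each stratum.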

Manager takeaway: Well-designed sampling allows organizations to quantify quality while keeping validation effort manageable.

Handling Uncertainty in AI Outputs

AI models do not produce perfect or certain results. Many models generate confidence or probability values alongside predictions.

Operational systems can use this information to:

- Flag low-confidence areas for human review

- Prioritize validation resources

- Communicate uncertainty to end users

Ignoring uncertainty can lead to overconfidence in automated outputs and inappropriate decision-making.
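A simple way to act on confidence values is to route each predicted feature into a review queue. The sketch below assumes each prediction carries a confidence between 0 and 1; the `triage` function, the queue names, and the thresholds of 0.6 and 0.9 are illustrative assumptions that would need to be set from validation results.

```python
def triage(predictions, review_below=0.6, accept_above=0.9):
    """Split (feature_id, confidence) pairs into three review queues."""
    queues = {"auto_accept": [], "spot_check": [], "human_review": []}
    for feature_id, confidence in predictions:
        if confidence >= accept_above:
            queues["auto_accept"].append(feature_id)
        elif confidence < review_below:
            queues["human_review"].append(feature_id)
        else:
            queues["spot_check"].append(feature_id)
    return queues


# Hypothetical building footprints with model confidence scores.
predictions = [("bldg-001", 0.97), ("bldg-002", 0.55), ("bldg-003", 0.74), ("bldg-004", 0.90)]
queues = triage(predictions)
# queues == {"auto_accept": ["bldg-001", "bldg-004"],
#            "spot_check": ["bldg-003"],
#            "human_review": ["bldg-002"]}
```

Model confidence scores are not necessarily well calibrated, so even the auto-accept queue should remain subject to periodic sampling-based checks.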

Manager takeaway: Uncertainty is a normal part of AI outputs and should be managed explicitly rather than ignored.

Risks and Challenges in Operational GeoAI

Operational GeoAI systems influence maps, statistics, and decisions that may carry financial, environmental, or legal consequences. As a result, risks must be actively managed rather than treated as purely technical issues.

For managers, recognizing these risks early helps design systems that are resilient, transparent, and aligned with organizational responsibilities.

Data Drift and Changing Conditions

Data drift occurs when the characteristics of input data change over time compared to the data used to train the model.

In geospatial contexts, drift can result from:

- Urban expansion and new construction patterns

- Seasonal differences in vegetation and water levels

- Changes in sensor types or image resolution

When drift is not addressed, model performance may degrade gradually without being immediately noticed.

Manager takeaway: Operational GeoAI requires periodic monitoring and retraining to remain aligned with evolving real-world conditions.

Overconfidence in Automated Outputs

Automated outputs can create a false sense of precision, especially when presented as clean maps without visible uncertainty.

Risks include:

- Treating AI-generated layers as fully authoritative without validation

- Ignoring known model limitations in specific environments

- Making high-stakes decisions based solely on automated outputs

Human review, uncertainty communication, and documented limitations are necessary safeguards.

Manager takeaway: GeoAI accelerates analysis but does not remove the need for expert judgment.

Legal and Accountability Considerations

When GeoAI outputs inform policy, regulation, or public communication, questions of responsibility and traceability arise.

Important considerations include:

- Documenting data sources and processing methods

- Maintaining audit trails of model versions and outputs

- Clarifying whether AI outputs are advisory or authoritative

Organizations must be able to explain how results were generated and who is responsible for their use.

Manager takeaway: Operational GeoAI systems should be designed with transparency, documentation, and clear accountability structures from the outset.

Governance, Documentation, and Auditability

Operational GeoAI systems must be explainable, maintainable, and defensible over time. Unlike short-term pilot projects, production systems may be used for years and may support decisions with legal or policy implications. Strong governance ensures continuity, accountability, and institutional trust.

For managers, governance is the framework that allows GeoAI to function as a reliable part of the enterprise GeoICT ecosystem.

Traceability of Data and Models

Traceability means being able to follow the full chain from raw input data to final output products.

This includes:

- Recording which datasets were used for training and inference

- Tracking preprocessing steps applied to imagery and labels

- Linking each model version to its training data and parameters

Traceability supports:

- Audits and external reviews

- Reproducing results when needed

- Understanding the impact of data or model updates
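In practice, traceability often takes the form of a small provenance record stored alongside each output. The sketch below shows one possible shape; the field names, the `provenance_record` function, and the example file names are assumptions, and inputs are passed as bytes only to keep the example self-contained.

```python
import hashlib
import json


def sha256_of(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def provenance_record(output_name, input_blobs, model_version, preprocessing_steps):
    """Build a record linking one output to its inputs, model, and processing.

    input_blobs: mapping of input name -> raw bytes of that input
    """
    record = {
        "output": output_name,
        "model_version": model_version,
        "preprocessing": list(preprocessing_steps),
        # Content hashes show whether an input changed, even if its name did not.
        "inputs": {name: sha256_of(blob) for name, blob in sorted(input_blobs.items())},
    }
    # A deterministic id: identical inputs, model, and steps give the same id.
    canonical = json.dumps(record, sort_keys=True).encode("utf-8")
    record["record_id"] = sha256_of(canonical)[:16]
    return record


inputs = {"scene_A.tif": b"raw pixels A", "scene_B.tif": b"raw pixels B"}
steps = ["cloud_mask", "resample_10m"]
rec = provenance_record("landcover_2024.tif", inputs, "1.1.0", steps)

# Changing any input (or the model version) yields a different record id.
changed = dict(inputs, **{"scene_B.tif": b"reprocessed pixels B"})
rec_changed = provenance_record("landcover_2024.tif", changed, "1.1.0", steps)
```

Storing such a record with every published layer lets an auditor confirm which model version and which exact input files produced a given result.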

Manager takeaway: If a result cannot be traced back to its source data and model version, it cannot be fully trusted in operational contexts.

Documentation for Long-Term Maintenance

Operational GeoAI systems must remain functional even as staff and technologies change.

Key documentation should cover:

- System architecture and data flow

- Model training and deployment procedures

- Data preparation workflows

- Quality assurance and validation methods

Clear documentation ensures that:

- New team members can understand and maintain the system

- Updates can be performed safely

- Knowledge is not lost when personnel change

Manager takeaway: Documentation is not an administrative burden; it is a core part of system sustainability.

Transparency Requirements in Government Contexts

In government and public-sector environments, transparency is especially important.

Stakeholders may need to understand:

- How AI-generated layers were created

- What data sources were used

- What limitations or uncertainties exist

Providing clear explanations and accessible metadata builds trust and supports responsible use of GeoAI outputs in public decision-making.

Manager takeaway: Transparency strengthens credibility and helps ensure that GeoAI systems are accepted by both internal and external stakeholders.

Cost and Sustainability Considerations

GeoAI projects often begin with enthusiasm for automation and innovation, but long-term success depends on realistic financial and operational planning. Unlike one-time mapping exercises, operational GeoAI systems introduce recurring costs and ongoing responsibilities.

For managers, sustainability is about ensuring that GeoAI initiatives remain affordable, maintainable, and aligned with long-term organizational goals.

Compute and Storage Costs

GeoAI processing can be computationally intensive, especially when working with high-resolution imagery or large geographic areas.

Compute costs may include:

- Cloud GPU usage for model training and large-scale inference

- Virtual machines or servers for preprocessing and post-processing

- Costs associated with scaling during peak processing periods

Storage costs often grow steadily over time due to:

- Archiving raw imagery and intermediate products

- Storing multiple versions of training datasets

- Maintaining historical outputs for comparison and audit

These costs are not always obvious during pilot phases but become significant in long-term operations.
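A simple multi-year projection shows why storage in particular becomes significant. The sketch below is illustrative only: the function, the prices, and the volumes are hypothetical placeholders, compute is assumed constant each year, and each year's storage is charged on the volume held at year end, which slightly overstates the cost.

```python
def yearly_costs(years, gpu_hours_per_run, runs_per_year, gpu_rate,
                 new_tb_per_year, storage_rate_per_tb_month):
    """Project compute and storage cost per year when data is never deleted."""
    costs = []
    stored_tb = 0.0
    for year in range(1, years + 1):
        stored_tb += new_tb_per_year  # archive keeps growing
        compute = gpu_hours_per_run * runs_per_year * gpu_rate
        storage = stored_tb * storage_rate_per_tb_month * 12
        costs.append({"year": year, "compute": compute,
                      "storage": storage, "total": compute + storage})
    return costs


# Hypothetical figures: monthly runs of 200 GPU-hours at 2.5 per hour,
# 20 TB of new data per year stored at 20 per TB per month.
projection = yearly_costs(years=3, gpu_hours_per_run=200, runs_per_year=12,
                          gpu_rate=2.5, new_tb_per_year=20,
                          storage_rate_per_tb_month=20)
totals = [row["total"] for row in projection]
# totals == [10800.0, 15600.0, 20400.0]
```

With these placeholder numbers, compute stays at 6,000 per year while storage rises from 4,800 to 14,400 by year three, overtaking compute in the second year. The pattern, not the specific figures, is the point: retention and archival policies deserve as much attention as GPU pricing.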

Manager takeaway: Compute and storage costs should be estimated not just for initial deployment but for sustained, repeated operations over years.

Long-Term Maintenance vs One-Time Projects

A one-time GeoAI project may produce a map or dataset for a specific purpose. An operational system, however, must be maintained continuously.

Ongoing responsibilities include:

- Updating training data as landscapes change

- Retraining and validating models periodically

- Maintaining data pipelines and infrastructure

- Supporting users and responding to issues

Staff time and expertise become recurring requirements, not one-time investments.

Organizations sometimes underestimate these ongoing commitments, leading to systems that degrade or become unused after initial deployment.

Manager takeaway: Before launching operational GeoAI, organizations should plan for multi-year support, including staff, infrastructure, and periodic system updates.